Why will LEMs become key in next generation engineering?

An article by Monumo CTO, Jaroslaw Rzepecki, looking at the next big evolution in engineering design over the next 5 years; Large Engineering Models and what they will mean for every complex system that engineers worry about today.

Designing engineering solutions to a given requirement is usually an expensive and time-consuming process. The cost and time required grow with the complexity of the system being designed and with the number of requirements the system needs to fulfill.

Imagine that I ask you to design me a chair. You would probably be able to sketch a simple design very quickly, either on a piece of paper or in a CAD tool. The effort required would be relatively low and the task relatively simple, because no constraints were attached. But what if I asked you to design not just a chair but an office chair: one that can bear a load of up to 250 kg, costs less than $100 and is made from 90% recycled materials? What if I also asked for the height of the chair to be adjustable with an electric motor? Suddenly this becomes a considerably more complicated request. Without significant expertise (possibly spanning multiple domains such as materials, health and safety, and electric motor applications) and good design tools at your disposal, you would probably struggle to deliver against the brief.

Even if you had the right expertise (or a team of people with the right expertise) and industry-standard design tools, the process would still take time and would likely require a few design iterations before the final product was complete. Further, even if you are an experienced chair designer, the need to reduce the design time and the risk of a faulty final design will incline you to deliver an office chair that is very similar to designs you have produced in the past: you will take a design you know works and modify it to fulfill my additional requirements. The complexity of the task and the tight time constraints push you, as a designer, towards solutions that are not very innovative and are only a small step beyond the old designs.

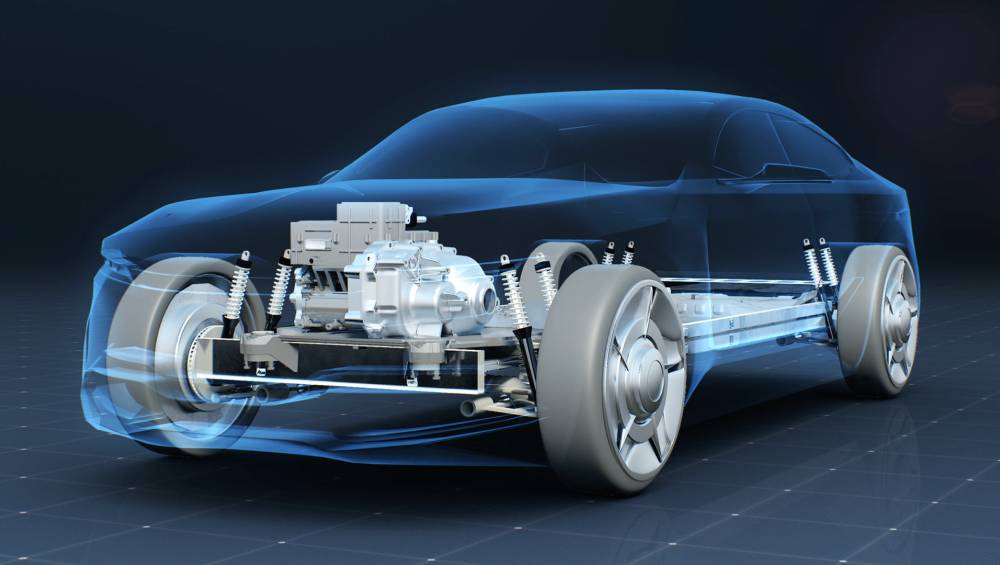

Today we are surrounded by numerous valuable and critical engineering systems that are far more complex than my simple chair example and often involve multiple components working together. It becomes extremely difficult to design those system-level components to fulfill an ever-growing list of tight and critical requirements such as cost, recyclability, performance, and sustainability.

However, solutions are starting to emerge that can, for the first time, truly tackle the complexity and the often conflicting design requirements found within complex engineering systems. Large Engineering Models (LEMs) are the machine-learning-era answer to this design challenge, providing engineers and designers with a tool that allows them to explore new, unintuitive designs in a fraction of the time and at a fraction of the cost of the traditional approach.

To understand what LEMs are (or will be in the future) and how they will revolutionise engineering design, let's first look at what generative AI models are. At a high level, a generative model is a “black box” that can generate new data resembling a given data set. During training, the model learns the distribution of the training data; at generation time, it samples from that distribution to produce new data. The difference between simple linear regression and modern generative AI models like ChatGPT and DALL-E 2 lies in the complexity of the data distribution that needs to be learned and in the amount of data needed to learn it. It is easy to work out the distribution of five points on a straight line; it is much harder to work out the distribution of all possible pixel arrangements that make up a photograph of a cat, or the distribution of words that could come next in a sentence given all the words written so far.

The technical details of how the training data is used to learn the distribution, how extra constraints are imposed on it, and how it is sampled at the end are what distinguish different generative approaches such as VAEs (variational auto-encoders), GANs (generative adversarial networks), and energy-based and diffusion-based models. A considerable amount of research has already been dedicated to making the training of generative models more stable and more data-efficient, and to imposing useful properties on the structure of the learned distribution, so that sampling can be conditioned on additional information. For example, a generative model of human faces can take additional requirements such as hair colour, or the presence of sunglasses or a hat, and generate a face with or without those features.
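The "learn a distribution, then sample from it" loop described above can be illustrated with a deliberately tiny sketch. It assumes the simplest possible model, one Gaussian per label, and uses made-up seat heights; the names `training_data`, `train` and `generate` are hypothetical and chosen only for this illustration. Real generative models learn far richer distributions, but the two phases are the same.

```python
from statistics import NormalDist, fmean

# Made-up training data (seat heights in cm), grouped by a label that the
# generator can later be conditioned on. Purely illustrative values.
training_data = {
    "office": [45, 47, 50, 52, 48, 46],
    "bar": [75, 78, 80, 74, 77, 76],
}


def train(data_by_label):
    """'Training': estimate one Gaussian (mean, standard deviation) per label."""
    return {
        label: NormalDist.from_samples(values)
        for label, values in data_by_label.items()
    }


def generate(model, label, n, seed=None):
    """'Generation': draw n new values from the distribution learned for `label`."""
    return model[label].samples(n, seed=seed)


model = train(training_data)

# Conditioning on the label changes which learned distribution is sampled.
office_heights = generate(model, "office", 1000, seed=0)
bar_heights = generate(model, "bar", 1000, seed=0)

print(f"mean generated office seat height: {fmean(office_heights):.1f} cm")
print(f"mean generated bar seat height:    {fmean(bar_heights):.1f} cm")
```

The generated values are new numbers that do not appear in the training data, yet they resemble it, and the label plays the role of the "additional information" that conditions the sample.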

LEMs aim to learn the design distribution of a given engineering system and then use it generatively (GLEM: Generative Large Engineering Models) to propose new designs conditioned on the given requirements. Going back to our initial example of an office chair, we can use a LEM to learn the design space of chairs from a large volume of diverse chair data that includes both the designs and their properties (e.g. weight, materials, cost). An understanding of what makes a chair structurally sound, what affects its cost, and how the laws of physics apply will give the model an edge in learning a design distribution that generalises well; we therefore expect this understanding to emerge through the training process.
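As a rough illustration of proposing designs conditioned on a requirement, the sketch below fits a two-variable Gaussian to synthetic (leg radius, rated load) pairs and then conditions it on a required load. Everything here is an assumption made for the example: the data is generated by an invented rule, the Gaussian is a stand-in for a learned design distribution, and `fit_joint_gaussian` and `condition_on_property` are hypothetical names. It is not a description of how a LEM is actually built.

```python
import random
from statistics import NormalDist, fmean

# Synthetic (leg radius in mm, rated load in kg) pairs. The rule
# "load = 15 * radius + noise" is invented for this illustration only.
rng = random.Random(0)
designs = []
for _ in range(200):
    radius = rng.uniform(8.0, 20.0)
    designs.append((radius, 15.0 * radius + rng.gauss(0.0, 10.0)))


def fit_joint_gaussian(pairs):
    """Estimate means, variances and covariance of (parameter, property) pairs."""
    xs = [x for x, _ in pairs]
    ys = [y for _, y in pairs]
    mean_x, mean_y = fmean(xs), fmean(ys)
    dof = len(pairs) - 1
    var_x = sum((x - mean_x) ** 2 for x in xs) / dof
    var_y = sum((y - mean_y) ** 2 for y in ys) / dof
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in pairs) / dof
    return mean_x, mean_y, var_x, var_y, cov_xy


def condition_on_property(params, required_y):
    """Distribution of the design parameter given a required property value.

    Uses the standard conditional-Gaussian formulas:
      mean = mean_x + cov_xy / var_y * (required_y - mean_y)
      var  = var_x - cov_xy**2 / var_y
    """
    mean_x, mean_y, var_x, var_y, cov_xy = params
    cond_mean = mean_x + cov_xy / var_y * (required_y - mean_y)
    cond_var = var_x - cov_xy ** 2 / var_y
    return NormalDist(cond_mean, cond_var ** 0.5)


params = fit_joint_gaussian(designs)
conditional = condition_on_property(params, required_y=250.0)

# Propose a few candidate leg radii for a chair rated at 250 kg.
candidates = conditional.samples(5, seed=1)
print([round(r, 1) for r in candidates])
```

Conditioning narrows the distribution: the spread of proposed radii is much smaller than the spread of radii in the training data, because the requirement rules out most of the design space.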

There is one big difference between LEMs and text or image generative AI like ChatGPT: engineering is much more objective than text and image generation. While it can be difficult to judge whether a given piece of generated text is “good”, it is much easier to evaluate an engineering design. Engineering designs must obey the laws of physics, and the claims of a generative LEM can be verified against precise computations. The laws of physics can also help LEMs learn the design distribution, so they need to be included in the training process. Fortunately, PINNs (physics-informed neural networks) are under active development in the ML community and provide a way of adding physics knowledge to model training. The combination of large volumes of diverse design data with careful inclusion of the laws of physics in the training process will give LEMs the power of generalisation needed to generate unintuitive designs.
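The idea of adding physics to training can be sketched with a loss that has two terms: a data term on labelled designs and a physics term that penalises disagreement with a known law on unlabelled designs. The example below is not a neural network and not a full PINN; it only shows that loss structure. It assumes a very simple law (axial stress in a chair leg equals force divided by area, with the load shared equally), synthetic labels computed from that same law, and hypothetical names such as `physics_informed_loss` and `radius_blind`.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def leg_stress_mpa(load_kg, n_legs, radius_m):
    """Axial stress per leg in MPa: sigma = F / (n * A), load shared equally."""
    force_n = load_kg * G
    area_m2 = math.pi * radius_m ** 2
    return force_n / (n_legs * area_m2) / 1e6


def physics_informed_loss(predict, labelled, collocation, weight=1.0):
    """Mean squared data misfit plus weighted mean squared physics residual."""
    data_term = sum((predict(*d) - y) ** 2 for d, y in labelled) / len(labelled)
    physics_term = sum(
        (predict(*d) - leg_stress_mpa(*d)) ** 2 for d in collocation
    ) / len(collocation)
    return data_term + weight * physics_term


# Synthetic labelled designs (load_kg, n_legs, radius_m), all with 10 mm legs.
labelled = [
    ((load, 4, 0.010), leg_stress_mpa(load, 4, 0.010)) for load in (100, 150, 250)
]
# Unlabelled designs where only the physics is checked; radius now varies.
collocation = [(250, 4, r) for r in (0.005, 0.010, 0.020)]


def radius_blind(load_kg, n_legs, radius_m):
    """A surrogate that fits the labelled data but ignores leg radius."""
    return leg_stress_mpa(load_kg, n_legs, 0.010)


loss_blind_data_only = physics_informed_loss(radius_blind, labelled, collocation, weight=0.0)
loss_blind = physics_informed_loss(radius_blind, labelled, collocation, weight=1.0)
loss_physical = physics_informed_loss(leg_stress_mpa, labelled, collocation, weight=1.0)

print(loss_blind_data_only, loss_blind, loss_physical)
```

With the data term alone, the radius-blind surrogate looks perfect because every labelled design shares the same radius. The physics term exposes it on designs outside the labelled set, which is the sense in which physics knowledge can help a model generalise. The same residual can also serve as an after-the-fact check on a generated design.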

At Monumo we focus on building the data needed to train LEMs for electric motor powertrains and on developing training methods that combine that data with deep neural networks in order to generate the electric motor powertrains best suited to a given application. The methods we develop can readily be adapted to other engineering systems, starting with power generators and eventually extending to the most complex engineering systems. At each step of this journey, we provide models that can significantly speed up design iteration and deliver the solutions our customers need.